What is CTEM? Why Vulnerability Management Alone No Longer Covers the Attack Surface

Updated April 2026

Most breaches do not start with a vulnerability. They start with access you already exposed.

This pattern shows up consistently across real incidents.

Security teams measure what they can see. Attackers exploit what they can reach.

The difference between those two is what I would describe as the visibility gap: the distance between what security tools report and what an attacker can actually access in practice.

Vulnerability scanners surface known weaknesses.

They do not show whether those weaknesses are reachable, whether controls actually stop them, or whether access already exists through identity, configuration, or process failures.

Continuous Threat Exposure Management (CTEM) exists to close that gap.

What is CTEM?

Continuous Threat Exposure Management (CTEM) is a security approach that continuously identifies, prioritises, and validates attack paths across an organisation’s environment. It focuses on what an attacker can actually reach, including vulnerabilities, identity exposures, misconfigurations, and control failures, rather than just known CVEs.

The difference becomes clearer when you compare what each approach is designed to answer:

| Area | Vulnerability Management | CTEM |
|---|---|---|
| Core focus | Known vulnerabilities (CVEs) | Full attack paths |
| Scope | Software weaknesses | Software weaknesses plus identity, misconfigurations, and control failures |
| Approach | Periodic scanning | Continuous exposure discovery |
| Output | List of vulnerabilities | Exploitable paths and risk scenarios |
| Prioritisation | CVSS and severity scores | Real-world exploitability in your environment |
| Key question | What is vulnerable? | What can an attacker actually reach? |

Both approaches matter. Vulnerability management tells you what is broken. CTEM tells you what is exposed.

Credential abuse was the leading initial access vector, at 22% of breaches, and the human element was present in 60% of all incidents (Verizon DBIR 2025). None of those entry points carries a CVE number. A vulnerability scanner would report nothing for a helpdesk process with no callback verification (the weakness exploited in multiple Scattered Spider attacks), a Global Administrator account with no PIM enforcement, or an npm package maintainer who was socially engineered into handing over publishing rights.

These are not edge cases.

This article covers what CTEM is, how it differs from vulnerability management, and how to start applying it without a platform purchase.

How CTEM Works

Continuous Threat Exposure Management is a security programme approach that moves beyond point-in-time assessments to continuously map, prioritise, and validate exposures across every part of the attack surface. Gartner coined the term in 2022, defining it as a systematic process that gives organisations a consistent way to identify which exposures matter most and verify that controls are working as designed.

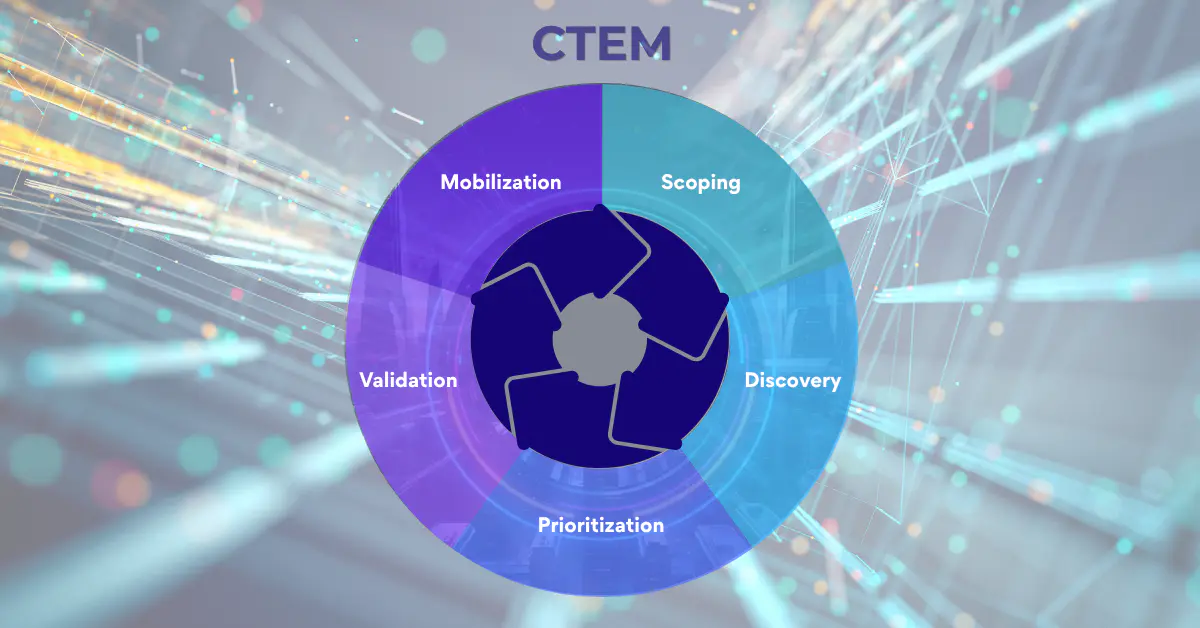

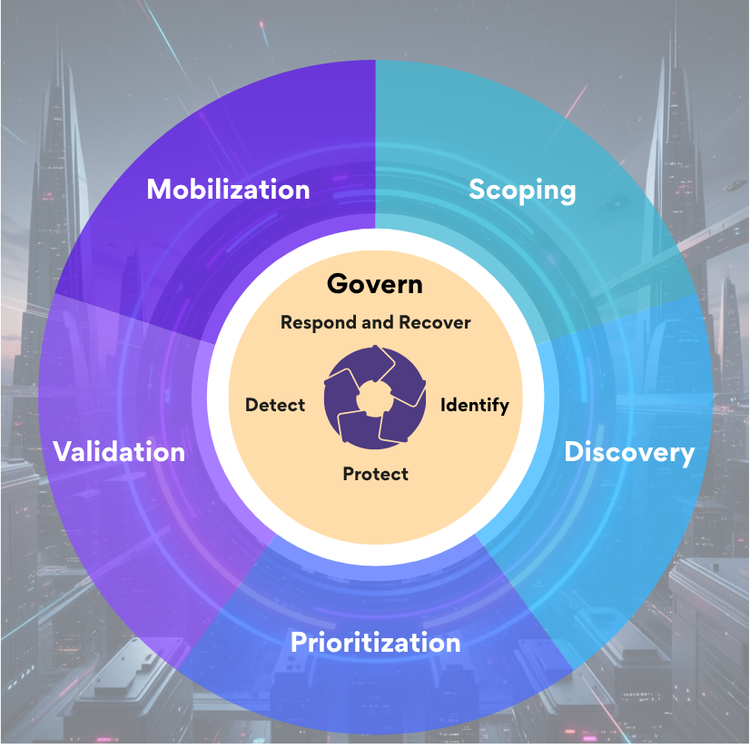

The five stages are scoping (defining what is in scope and what an attacker would target), discovery (continuously identifying exposures across that surface), prioritisation (ranking by actual exploitability in your environment rather than generic CVSS scores), validation (testing whether your controls stop the attack paths identified), and mobilisation (coordinating remediation across the teams who own the risk).

What distinguishes CTEM from a vulnerability management programme is the scope of what counts as an exposure. An unpatched CVE is an exposure. A misconfigured admin portal with no conditional access policy is an exposure. An over-permissioned service account that has not been reviewed since a merger three years ago is an exposure. An identity provider with MFA not enforced on admin accounts is an exposure. None of those last three have CVE numbers. Most vulnerability scanners will not surface them.

CTEM treats all of them as part of the same risk picture.
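To make the "same risk picture" idea concrete, here is a minimal Python sketch that models every exposure with a single record type in which the CVE identifier is optional. The class, categories, asset names, and the `CVE-YYYY-NNNN` placeholder are all hypothetical; the point is only that a CVE-keyed tool sees the one item carrying an identifier and nothing else.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Exposure:
    """Hypothetical record for one attack-surface exposure, CVE or not."""
    asset: str
    description: str
    category: str                 # "vulnerability", "configuration", "identity", ...
    cve_id: Optional[str] = None  # None for exposures with no CVE identifier

    @property
    def scanner_visible(self) -> bool:
        # A CVE-keyed scanner can only report exposures that carry a CVE ID.
        return self.cve_id is not None


exposures = [
    Exposure("web-01", "Unpatched web framework", "vulnerability", "CVE-YYYY-NNNN"),
    Exposure("admin-portal", "No conditional access policy", "configuration"),
    Exposure("svc-legacy", "Service account unreviewed since merger", "identity"),
    Exposure("idp", "MFA not enforced on admin accounts", "identity"),
]

visible = [e for e in exposures if e.scanner_visible]
blind_spots = [e for e in exposures if not e.scanner_visible]
print(f"Scanner sees {len(visible)} of {len(exposures)} exposures")
for e in blind_spots:
    print(f"  not reported: {e.asset} ({e.category}): {e.description}")
```

In a real programme the records would come from several sources (scanner, identity provider, configuration management), but keeping them in one shape is what lets them be prioritised against each other.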

What that means in practice is worth stating directly. In more cases than most organisations would want to admit, the root cause of a breach is not an unpatched vulnerability or a missing control. It is a control that was deployed but never worked correctly, or stopped working and nobody noticed. EDR running in audit mode because nobody changed the default after rollout. OS hardening policies applied to part of the estate and never completed. A technician who disables a security control to troubleshoot a software issue, resolves the issue, moves on, and leaves the control disabled until something bad happens months later. None of these appear as vulnerability scanner findings. They are configuration and control effectiveness failures: exactly the category CTEM's discovery and validation phases exist to surface.

You Can Be Patch-Perfect and Still Be Breached

In March 2026, an Iran-linked group called Handala executed a mass device wipe against Stryker, a $25 billion medical device company. No malware. No CVE. No zero-day exploit.

The attackers accessed the Microsoft Intune management console and issued a remote wipe across enrolled devices, impacting offices in dozens of countries. Stryker confirmed significant disruption in its SEC filing. Patch status was irrelevant. The failure was not in software, but in a privileged access model where standing Global Administrator roles and no Multi-Admin Approval meant one compromised account was enough.

The controls that would have changed the outcome are straightforward: Privileged Identity Management to remove standing privilege, Multi-Admin Approval on destructive actions, and Conditional Access for admin access. None of these are patch management. All of them are CTEM-relevant exposures.

Scattered Spider used the same identity-first approach against MGM Resorts in 2023. Variations of this playbook have been repeated across multiple targets. A ten-minute helpdesk call resulted in full administrative access. No exploit. No malware. No technical skill at the point of entry.

The attacker impersonated an employee identified via LinkedIn and had credentials reset before anyone flagged the request. For a full breakdown, see the Scattered Spider analysis.

The npm ecosystem shows the same pattern. Maintainer account attacks going back to 2018 follow identical logic: no vulnerability, no CVE, just compromised credentials or a voluntary handover of publishing rights. This is not a one-off. Axios, used in 80% of cloud environments, was compromised in March 2026 after two weeks of social engineering. Clean vulnerability scan. Breached.

Three incidents. Three industries. One repeated pattern, and the same conclusion: a vulnerability scanner would have returned green while all of this was happening.

The organisation had visibility into vulnerabilities.

It did not have visibility into what an attacker could actually reach.

That is the visibility gap in practice.

The visibility gap exists across three layers:

- Surface visibility → what scanners report (CVEs, assets)

- Access reality → what identities, permissions, and configs allow

- Control effectiveness → whether defences actually stop anything

CTEM exists to connect all three.

What Percentage of Breaches Start With a Vulnerability?

The 2025 Verizon Data Breach Investigations Report, covering 22,052 security incidents and 12,195 confirmed breaches, makes the distribution of initial access vectors clear.

Credential abuse was the leading initial access vector at 22% of breaches. Vulnerability exploitation followed at 20%, with phishing at 16%. The human element was present in 60% of all incidents (Verizon DBIR 2025). Third-party involvement in breaches doubled to 30%, up from 15% the prior year.

The vulnerability exploitation figure requires context. It increased 34% year-on-year, which is significant, but the report is specific about where: edge devices and VPNs. Internet-facing perimeter systems that are exposed by design. That is not the broad internal software estate that traditional vulnerability management scans. It is the attack surface at the boundary, where the average time to patch is 209 days and the average attacker time-to-exploitation is five days (Verizon DBIR 2025, Tenable).

That gap of more than 200 days is where organisations are most exposed, and it is exactly the gap that CTEM's continuous discovery and prioritisation are designed to close.
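The arithmetic behind that gap is simple enough to sketch. This is a deliberately crude calculation: it subtracts two averages from the sources cited above, which says nothing about any individual CVE. The edge-device inventory is hypothetical and only illustrates turning the average into a check an organisation could run against its own data.

```python
# Averages as cited in this article (Verizon DBIR 2025, Tenable).
AVG_DAYS_TO_PATCH_EDGE = 209
AVG_DAYS_TO_EXPLOIT = 5


def exposure_window(days_to_patch: int, days_to_exploit: int) -> int:
    """Days a flaw is typically exploitable but still unpatched (0 if patching wins)."""
    return max(0, days_to_patch - days_to_exploit)


print(exposure_window(AVG_DAYS_TO_PATCH_EDGE, AVG_DAYS_TO_EXPLOIT))  # 204

# Hypothetical edge inventory: device -> (days since advisory published, patched?)
edge_devices = {
    "vpn-gw-01": (3, False),
    "vpn-gw-02": (40, False),
    "fw-edge-01": (40, True),
}

# Anything unpatched for longer than the typical time-to-exploit is already in the window.
in_window = sorted(
    name for name, (age, patched) in edge_devices.items()
    if not patched and age > AVG_DAYS_TO_EXPLOIT
)
print(in_window)  # ['vpn-gw-02']
```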

But even setting edge device exploitation aside, the majority of initial access is happening through vectors that do not appear on a CVE list at all. Credential abuse. Phishing. Social engineering. Misconfigured third-party access. These are not software vulnerabilities. They are attack surface elements that require a different kind of visibility to detect.

Why Vulnerability Management Became the Default (And Why the Threat Picture Changed)

Vulnerability management was the right answer to the right problem.

Before security was taken seriously as an operational discipline, malware infections were treated as a nuisance rather than a security event. Early in my career, triaging malware infections was seen as a distraction: something that slowed devices down and interrupted users, not a critical operational concern. There was no zero-tolerance response. No escalation path. Infections got cleaned up and people moved on.

That changed as malware became more sophisticated and started having real operational consequences. When ransomware arrived and organisations began losing access to their own systems, paying significant sums to recover data, or suffering days of downtime, the industry had to take it seriously. Traditional IT roles evolved into security functions. Boards started asking questions. Budgets shifted.

The same pattern played out with patching. Conficker in 2008 infected an estimated 9 to 15 million systems because organisations had not applied a Microsoft patch that had been available for months. WannaCry in 2017 caused an estimated $4 billion in damage globally by exploiting the SMB vulnerability behind the EternalBlue exploit, for which Microsoft had released a patch (MS17-010) 59 days before the attack. The industry's response was correct: invest in vulnerability management, enforce patch cadence, close the known-CVE gap. It worked. Patching hygiene improved substantially across the following decade.

The problem is that the lesson calcified. The mental model that a breach equals an unpatched vulnerability embedded itself in boardrooms, in compliance frameworks, in how security budgets are justified, and in how success gets measured. Conficker and WannaCry became the reference points for why security investment matters, and they remained the reference points long after the threat picture had changed.

Attackers adapted. They found that compromising a person is faster, cheaper, and more reliable than finding an unpatched CVE. They found that misconfigured cloud environments are more accessible than hardened endpoint fleets. They found that the identity layer (credentials, tokens, session cookies, OAuth permissions) is the most direct route into modern infrastructure.

The cognitive bias remained. The tooling investment followed the historical model rather than the current threat distribution.

What makes this pattern visible today is that some of the most fundamental controls still have not reached baseline adoption. OS hardening and least privilege are often treated as advanced practice, found mainly in more mature organisations, rather than as standard. I have seen the difference they make to operational resilience in environments where they are applied correctly, and I have seen how much attack surface gets given away in environments where they are not. The lesson from ransomware was applied to patching. The equivalent lesson from identity-based attacks has not yet been fully absorbed.

CTEM is the attempt to correct that misalignment: not by abandoning vulnerability management but by placing it inside a broader framework that reflects how breaches start.

CTEM vs Vulnerability Management: Two Different Questions

Traditional vulnerability management asks: what software in our environment has known weaknesses?

CTEM asks: what paths could an attacker take through this environment right now, using any technique they would use?

Those are different questions. They produce different answers. And they require different controls.

A vulnerability management programme will tell you about unpatched CVEs, outdated software versions, and known exploitable conditions in the systems it scans. That is valuable information. It is not the complete picture.

It will not tell you that your Global Admin accounts have no PIM enforcement. It will not surface the fact that your Intune console is reachable from any unmanaged device with the right credentials. It will not flag that a service account created during a migration project three years ago still holds excessive permissions and has never been reviewed. It will not detect that your helpdesk process has no callback verification, making it viable for vishing.

None of those are software vulnerabilities. All of them are on the attack path.

The MITRE ATT&CK framework is useful here. CTEM maps exposures to the techniques an adversary would use: initial access methods, lateral movement paths, privilege escalation routes, persistence mechanisms. A vulnerability scanner maps to a subset of Initial Access (specifically software exploitation). CTEM maps across the entire framework, including the social engineering, credential access, and defence evasion techniques that dominate modern incident data.
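A small sketch of that coverage difference, using a hand-picked subset of real ATT&CK Enterprise techniques and their tactics. The assumption that a CVE scanner only speaks to T1190 (Exploit Public-Facing Application) mirrors the claim above and is a simplification; the technique list is illustrative, not a complete mapping of any incident.

```python
# Small, illustrative subset of MITRE ATT&CK Enterprise: technique ID -> (name, tactics).
TECHNIQUES = {
    "T1190": ("Exploit Public-Facing Application", {"Initial Access"}),
    "T1566": ("Phishing", {"Initial Access"}),
    "T1078": ("Valid Accounts",
              {"Initial Access", "Persistence", "Privilege Escalation", "Defense Evasion"}),
    "T1656": ("Impersonation", {"Defense Evasion"}),
    "T1621": ("Multi-Factor Authentication Request Generation", {"Credential Access"}),
    "T1021": ("Remote Services", {"Lateral Movement"}),
}

# Assumption: only software-exploitation techniques are visible to a CVE scanner.
SCANNER_VISIBLE = {"T1190"}


def tactics_for(technique_ids):
    """Union of the ATT&CK tactics that a set of techniques belongs to."""
    return set().union(*(TECHNIQUES[t][1] for t in technique_ids))


scanner_tactics = tactics_for(SCANNER_VISIBLE)
ctem_tactics = tactics_for(TECHNIQUES)
uncovered = sorted(ctem_tactics - scanner_tactics)
print("Scanner covers:", sorted(scanner_tactics))
print("Only CTEM covers:", uncovered)
```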

I have had this conversation with security teams across vendor engagements for years. The ones who understand their risk posture best are not necessarily the ones with the highest patch rates. They are the ones who can answer: if an attacker had valid credentials for a mid-level account right now, what could they reach? In practice, the answer is almost always more than the team expects. I see this regularly: a low-privilege account with no special entitlements able to do extensive enumeration and discovery across the environment because permissions were never hardened after initial deployment. The attacker is not doing anything technically impressive. They are walking through doors that were left open. That is not a CVE. It is a configuration failure that gives attack surface visibility away for free.

That is the question CTEM is built to answer.
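A minimal sketch of answering that question, assuming the access relationships have already been collected into a graph. Tools such as BloodHound and AzureHound gather this kind of relationship data in practice; here the graph is hand-built and every node name is hypothetical. A breadth-first search from the compromised account returns everything an attacker holding it could eventually reach.

```python
from collections import deque

# Hypothetical access graph: an edge A -> B means "holding A lets an attacker use or reach B".
ACCESS_GRAPH = {
    "user:mid_level": ["group:all_staff", "host:file-server"],
    "group:all_staff": ["share:finance-archive"],
    "host:file-server": ["cred:svc_backup"],  # cached service-account credential
    "cred:svc_backup": ["host:db-01", "host:backup-01"],
    "host:db-01": ["data:customer_db"],
}


def reachable_from(start, graph):
    """Breadth-first search: every node reachable from `start`, excluding start itself."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {start}


reach = reachable_from("user:mid_level", ACCESS_GRAPH)
print(sorted(reach))
```

In this toy graph, a mid-level account with no special entitlements reaches the customer database in four hops via a cached service-account credential: the "more than the team expects" answer described above.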

The Five CTEM Stages Without the Vendor Pitch

Every CTEM vendor will describe these stages slightly differently, and they will map their product to every one of them. Here is what the stages mean operationally, without the sales layer.

Scoping defines what matters. Not every asset and not every technique is equally relevant. Scoping means identifying your most valuable targets, the attack paths most likely to reach them, and which parts of the attack surface are in scope for continuous monitoring. It is a business decision before it is a technical one: which systems, if compromised, would cause the most damage?

The failure mode I see most often is treating scoping as an IT asset inventory exercise. It is not. A list of critical assets is not the same as a map of what an attacker would need to reach them. Attackers need two things above everything else: the ability to move freely through the network and credential access as the enabler for that movement. Overly permissioned users are still a widespread and underaddressed problem. In many environments I have worked with, a reasonably positioned account can traverse significant portions of the network simply because permissions were never hardened after the initial deployment. That is the attack surface being given away before the attacker has done anything sophisticated. Scoping that does not account for lateral movement paths and credential access routes is not scoping from the attacker's perspective. It is scoping from the IT team's perspective, which is a different map.
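Scoping from the attacker's perspective inverts the asset-inventory question: start at the crown jewel and walk access edges backwards to find every principal with a route to it. The sketch below assumes a hypothetical edge list (an edge from A to B means access to A grants access to B) and returns one shortest path per principal. It illustrates the idea and is not a scoping tool.

```python
from collections import deque

# Hypothetical access edges: (source, destination).
EDGES = [
    ("user:helpdesk_01", "role:password_reset"),
    ("role:password_reset", "user:it_admin"),
    ("user:it_admin", "host:jump-01"),
    ("host:jump-01", "data:payroll"),
    ("user:contractor", "host:jump-01"),
    ("user:marketing", "share:brand-assets"),
]
CROWN_JEWEL = "data:payroll"


def paths_to_target(edges, target):
    """Reverse BFS from the target; returns {principal: shortest path ending at target}."""
    incoming = {}
    for src, dst in edges:
        incoming.setdefault(dst, []).append(src)
    next_hop = {target: None}  # node -> next node on its shortest path to target
    queue = deque([target])
    while queue:
        node = queue.popleft()
        for src in incoming.get(node, []):
            if src not in next_hop:
                next_hop[src] = node
                queue.append(src)
    paths = {}
    for node in next_hop:
        if node == target:
            continue
        path, cur = [node], node
        while next_hop[cur] is not None:
            cur = next_hop[cur]
            path.append(cur)
        paths[node] = path
    return paths


for principal, path in sorted(paths_to_target(EDGES, CROWN_JEWEL).items()):
    if principal.startswith("user:"):
        print(" -> ".join(path))
```

Note what the output surfaces: a helpdesk account sits on a path to payroll through a password-reset role, which is the kind of route an IT asset list never shows.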

Discovery is continuous rather than periodic. It surfaces new assets, changed configurations, newly exposed services, and drift from known-good states. Shadow IT, forgotten cloud storage buckets, unmanaged devices, third-party integrations with excessive permissions.

What discovery also finds, and this is the category most teams underestimate, is what I would call shadow configuration: settings that were changed during troubleshooting or deployment and never restored, controls with gaps in coverage that nobody mapped, policy exceptions that were approved temporarily and never reviewed. A technician disables a security control to resolve a software issue. The issue gets fixed. They move on. Three months later, an attacker benefits from a gap that the team had no idea existed. Discovery in a CTEM programme finds these before an attacker does. A point-in-time assessment finds them only if it happens to run after the change and before the incident.
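Shadow configuration is, at its core, drift from a known-good baseline. A minimal sketch, assuming settings can be exported per device as key/value pairs; the setting names and values are hypothetical, not any product's schema. Run continuously against each new snapshot, a comparison like this catches the control a technician disabled and never re-enabled.

```python
# Hypothetical known-good baseline for one device class.
BASELINE = {
    "edr_mode": "block",
    "tamper_protection": True,
    "firewall_enabled": True,
    "local_admin_members": ("Administrators",),
}


def detect_drift(baseline, current):
    """Return {setting: (expected, actual)} for every setting that differs from
    the baseline, including settings missing from the current snapshot."""
    drift = {}
    for key, expected in baseline.items():
        actual = current.get(key, "<missing>")
        if actual != expected:
            drift[key] = (expected, actual)
    return drift


# Snapshot taken after a troubleshooting session that nobody reverted.
snapshot = {
    "edr_mode": "audit",
    "tamper_protection": False,
    "firewall_enabled": True,
    "local_admin_members": ("Administrators",),
}

for setting, (expected, actual) in detect_drift(BASELINE, snapshot).items():
    print(f"{setting}: expected {expected!r}, found {actual!r}")
```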

Prioritisation is where CTEM departs most sharply from traditional vulnerability management. Vulnerability management has evolved beyond generic CVSS scores. EPSS (Exploit Prediction Scoring System) adds real-world exploitation likelihood to the picture, which is a meaningful improvement: a high CVSS score with a low EPSS probability can be less urgent than a medium-severity vulnerability that is likely to be exploited, or is already being exploited, in the wild. But EPSS still only scores known vulnerabilities with CVE identifiers. A misconfigured admin portal, an over-permissioned service account, a helpdesk process with no callback verification: none of those have EPSS scores, because there is no CVE to score.

Even within the CVE-centric view, prioritisation has a third dimension that vulnerability management rarely captures: compensating control effectiveness. A critical CVE on a device where the EDR is properly configured and demonstrably blocking exploitation attempts carries a different real-world risk profile than the same CVE on a device with a misconfigured policy or a coverage gap. I have seen environments where a vulnerability was flagged critical across an entire estate, but exploit validation revealed the EDR was blocking it on most devices and failing silently on a subset. Those outlier devices were the actual exposure. The CVSS score was the same across all of them.

Two organisations can have the same CVE and face very different levels of risk depending on their architecture, their controls, and whether those controls have been validated.
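One way to sketch those three dimensions together is a score that starts from severity and likelihood, then adjusts for validated compensating controls and for whether the device sits on a path to something critical. The formula, the 0.2 and 3 multipliers, the device names, and the `CVE-YYYY-NNNN` placeholder are all hypothetical choices for illustration; the takeaway is the ordering, not the numbers.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    device: str
    cve_id: str
    cvss: float              # CVSS base score, 0-10
    epss: float              # EPSS probability, 0-1
    control_validated: bool  # compensating control tested and confirmed blocking
    path_to_critical: bool   # device sits on an attack path to a high-value asset


def priority(f: Finding) -> float:
    """Illustrative score only: severity x likelihood, scaled by environmental context."""
    score = (f.cvss / 10) * f.epss
    if f.control_validated:
        score *= 0.2  # assumption: a validated control sharply cuts residual risk
    if f.path_to_critical:
        score *= 3    # assumption: a reachable high-value asset raises impact
    return round(score, 3)


# Same CVE, same CVSS and EPSS, very different environments.
protected = Finding("ws-laptop-114", "CVE-YYYY-NNNN", 9.8, 0.5, True, False)
exposed = Finding("dmz-app-07", "CVE-YYYY-NNNN", 9.8, 0.5, False, True)

queue = sorted([protected, exposed], key=priority, reverse=True)
for f in queue:
    print(f.device, priority(f))
```

Both findings stay in the queue; context only changes which one is fixed first.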

Validation tests whether controls work under real conditions. This is the stage most vulnerability management programmes skip entirely. Having a control deployed and having it function correctly against a real attack technique are two different things. Validation includes automated security testing, attack path simulation, and adversarial emulation mapped to MITRE ATT&CK. It answers the question most frameworks avoid: would this control stop an actual attack? Where the answer is no, on the outlier devices that the assumed protection does not actually cover, validation is what surfaces the gap before an attacker does.

This is the defence-in-depth principle applied to exposure management. No single control is guaranteed. Patching fails or is delayed. The compensating control becomes the functional line of defence, but only if it is working correctly on the right assets, which requires validation to confirm rather than assume.
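Finding those outliers is mostly a matter of reading validation results per device rather than as an estate-wide average. A minimal sketch, assuming a test harness has emulated one technique (T1003.001, OS Credential Dumping: LSASS Memory, chosen only as an example) on each device and recorded an outcome; the device names and outcome labels are hypothetical.

```python
from collections import Counter

TECHNIQUE = "T1003.001"  # example technique under test

# Hypothetical per-device outcomes from the emulation run.
results = {
    "ws-001": "blocked", "ws-002": "blocked", "ws-003": "blocked",
    "ws-004": "not_blocked", "ws-005": "blocked", "srv-010": "no_telemetry",
}


def validation_gaps(results):
    """Devices where the control did not demonstrably stop the technique.
    'no_telemetry' counts as a gap: no alert is not proof of protection."""
    return sorted(device for device, outcome in results.items() if outcome != "blocked")


summary = Counter(results.values())
gaps = validation_gaps(results)
print(TECHNIQUE, dict(summary))
print("Outliers:", gaps)
```

A summary that reads "mostly blocked" is exactly the picture in which the two outliers go unnoticed.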

Mobilisation coordinates the response. Prioritised risk information has no value if it cannot get to the people who own the systems and can act on it. Mobilisation means translating CTEM findings into actionable remediation tasks, routing them to the right owners, tracking progress, and confirming that remediation has changed the risk calculation.
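Mechanically, mobilisation is routing plus a closing condition. The sketch below assumes a hypothetical asset-to-owner map and status values; the one deliberate design choice is that a task only leaves the outstanding list once it is "verified", meaning validation has confirmed the fix changed the risk, not merely that someone marked it remediated.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Task:
    finding: str
    asset: str
    status: str = "open"  # open -> remediated -> verified


# Hypothetical ownership map: asset -> owning team.
OWNERS = {"idp": "identity-team", "dmz-app-07": "app-platform", "intune": "endpoint-team"}


def route(tasks, owners, default="security-triage"):
    """Group tasks by owning team; assets with no known owner go to a triage queue."""
    queues = defaultdict(list)
    for t in tasks:
        queues[owners.get(t.asset, default)].append(t)
    return dict(queues)


def outstanding(tasks):
    """Only validated ('verified') remediation closes a task."""
    return [t for t in tasks if t.status != "verified"]


tasks = [
    Task("MFA not enforced on admin accounts", "idp"),
    Task("EDR coverage gap", "dmz-app-07", "remediated"),
    Task("Standing Global Administrator role", "intune", "verified"),
    Task("Unreviewed third-party OAuth app", "vendor-crm"),
]
for team, items in route(tasks, OWNERS).items():
    print(team, [t.finding for t in items])
print("Outstanding:", len(outstanding(tasks)))
```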

How CTEM Makes Vulnerability Management More Powerful

Vulnerability management is not the wrong approach. It is an incomplete one when used in isolation.

Unpatched CVEs create attack paths. A CTEM programme incorporates vulnerability data as one input into the broader risk picture. The question changes from "what CVEs exist in our environment" to "which of these CVEs are on an exploitable path to something that matters, given our specific architecture and current controls?"

To be clear: a deprioritised CVE still needs patching. CTEM does not remove that obligation. What changes is the order. Two systems, same CVE, same score: one has a compensating control confirmed to be blocking exploitation with no viable attack path to anything critical. The other sits on an exposed segment with an EDR coverage gap and a direct route to sensitive data. Same vulnerability. Very different exposure. The CTEM approach reorders the queue by actual risk in your environment, not by the score that applies equally to both.

CTEM also extends the risk picture to the exposures that vulnerability management cannot see: identity and access configurations, conditional access policy gaps, over-permissioned accounts, third-party access that has not been reviewed, control effectiveness degradation over time. The Stryker and Scattered Spider incidents were not CVSS failures. They were failures of identity governance, access design, and process controls, exactly the category of exposure CTEM's continuous discovery and validation phases are designed to surface.

Patching remains important. CTEM does not replace it: it contextualises it. Which CVEs are on an active attack path to a high-value asset? Which are mitigated in practice by a compensating control that validation has confirmed is working? Which devices were assumed to be protected but validation revealed as outliers? That is a richer and more accurate view of real-world risk than any score alone provides, and it is the view that tells you where to act first.

The organisations I have worked with that manage their risk posture most effectively are not choosing between vulnerability management and CTEM. They are using vulnerability data inside a CTEM programme, with the prioritisation and validation layers that give that data operational meaning.

Patch-and-scan remains necessary. It is no longer sufficient.

How to Start Without a New Platform

CTEM is an approach before it is a product purchase. Organisations can begin applying CTEM thinking with tooling they already have.

Start with scoping. Identify the three to five systems or data stores that, if compromised, would cause the most significant business impact. Map who has access to them, what the access paths look like, and what controls sit between an external attacker and those assets. That mapping exercise alone will surface exposures that vulnerability scanning has never flagged.

Run a privilege audit. Enumerate every account holding administrative rights in your environment, including service accounts and third-party integrations. Identify standing privilege that should be time-bound, accounts that have not been used in 90 days, and role assignments that do not map to a current job function. This is the identity exposure layer that CTEM's discovery phase is designed to find continuously. Starting manually establishes the baseline.
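The three checks in that audit can be sketched as a short script over an exported list of privileged role assignments. The field names and accounts below are hypothetical and do not match any specific product's export format; adapt them to whatever your identity provider actually produces.

```python
from datetime import date, timedelta

# Hypothetical export of privileged role assignments.
ACCOUNTS = [
    {"name": "a.admin", "role": "Global Administrator", "standing": True,
     "last_sign_in": date(2026, 3, 30), "job_function": "IT Operations"},
    {"name": "svc-migration", "role": "Exchange Administrator", "standing": True,
     "last_sign_in": date(2025, 6, 1), "job_function": None},
    {"name": "j.smith", "role": "Helpdesk Administrator", "standing": False,
     "last_sign_in": date(2026, 4, 1), "job_function": "Service Desk"},
]


def audit(accounts, today, stale_after_days=90):
    """Flag standing privilege, stale accounts, and roles with no mapped job function."""
    findings = []
    for acct in accounts:
        if acct["standing"]:
            findings.append((acct["name"], "standing privilege: make time-bound"))
        if today - acct["last_sign_in"] > timedelta(days=stale_after_days):
            findings.append((acct["name"], f"unused for more than {stale_after_days} days"))
        if not acct["job_function"]:
            findings.append((acct["name"], "role not mapped to a current job function"))
    return findings


for name, issue in audit(ACCOUNTS, today=date(2026, 4, 15)):
    print(f"{name}: {issue}")
```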

Test one control. Pick a specific detection or prevention control and run a tabletop or technical exercise against it using a MITRE ATT&CK technique relevant to your sector. Does the control fire? Is the alert routed to someone who acts on it? Does anyone know what to do? Validation does not require a platform. It requires a question and the honesty to find out whether the answer is yes.
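Recording the exercise as a chain of yes/no answers makes the weakest link explicit. A minimal sketch, assuming a password-spray exercise (ATT&CK T1110.003, Brute Force: Password Spraying) as the example technique; the class and control names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ControlTest:
    technique: str  # ATT&CK technique ID used for the exercise
    control: str
    fired: bool     # did the detection or prevention trigger?
    routed: bool    # did the alert reach someone who acts on it?
    actioned: bool  # did that person know what to do, and do it?

    def verdict(self) -> str:
        # Check the chain in order; the first broken link is the finding.
        if not self.fired:
            return "FAIL: control did not fire"
        if not self.routed:
            return "FAIL: alert fired but was not routed to a responder"
        if not self.actioned:
            return "FAIL: alert reached a responder but no action was taken"
        return "PASS"


test = ControlTest("T1110.003", "Password spray detection", fired=True, routed=False, actioned=False)
print(test.verdict())
```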

Once your CTEM programme is producing findings, getting those findings to the people who can act on them is the next challenge. Communicating security risk to executive audiences requires a different frame from operational reporting. Patch counts and MTTR figures do not translate into the decisions boards make. The information security metrics for executives guide covers how to connect exposure management findings to the language and reference frame boards use to make decisions.

The full CTEM maturity journey does eventually involve tooling. But the mindset shift, from point-in-time scanning to continuous exposure management, from CVE-centric to technique-centric, from "is the control deployed" to "does the control work", is available immediately, regardless of budget.

The Gap Between Patched and Protected

Conficker and WannaCry taught the industry that patching saves organisations. That lesson was true and it still applies. The mistake was treating it as the complete answer to a problem that has since changed shape.

The 2025 breach data shows credential abuse leading initial access, the human element present in 60% of incidents, and third-party involvement doubling. Scattered Spider brought down MGM with a phone call. Handala wiped Stryker's device fleet without touching a vulnerability. The npm ecosystem has been targeted repeatedly by attackers who need only one set of compromised maintainer credentials.

Vulnerability management tells you about the weaknesses in your software. CTEM tells you about the weaknesses in your security posture. You need both, and the second is the frame that gives the first operational meaning.

Patch rates tell you what you’ve fixed.

CTEM tells you what an attacker can still reach.

Most environments still haven’t answered the second question.

Key Resources:

- Scattered Spider: The Attack Chain, Hard Lessons, and What Comes Next

- Microsoft Intune Security: Hardening Privileged Access

- How Attackers Target npm Maintainer Accounts

- What is AzureHound? The Cloud Tool Threat Actors Use

- What Are Infostealers?

- Gartner: Implement a Continuous Threat Exposure Management Programme (original 2022 framework introduction)

- MITRE ATT&CK Framework

References:

- Verizon (2025). 2025 Data Breach Investigations Report. Credential abuse 22%, vulnerability exploitation 20% (34% increase), human element 60%, third-party involvement 30%.

- Tenable (2025). Cited in Verizon DBIR 2025: average time to patch edge device CVEs 209 days; average attacker time-to-exploitation 5 days.

- Gartner (2022). Implement a Continuous Threat Exposure Management Programme.

- Kevin Beaumont, Rafe Pilling / Sophos (2026). Stryker Handala attack vector identification.

- CISA / FBI Joint Advisory (2023). Scattered Spider threat actor profile.

- Google Threat Intelligence Group (2026). UNC1069 attribution, Axios npm supply chain attack.

- ALPHV/BlackCat (2023). Published account of MGM Resorts attack, September 14 2023.
