Why I Built an AI Cybersecurity Learning Assistant

Learning cybersecurity is overwhelming. There are thousands of courses, certifications, tools, and frameworks competing for your attention. Most beginners spend more time deciding what to study than actually studying.

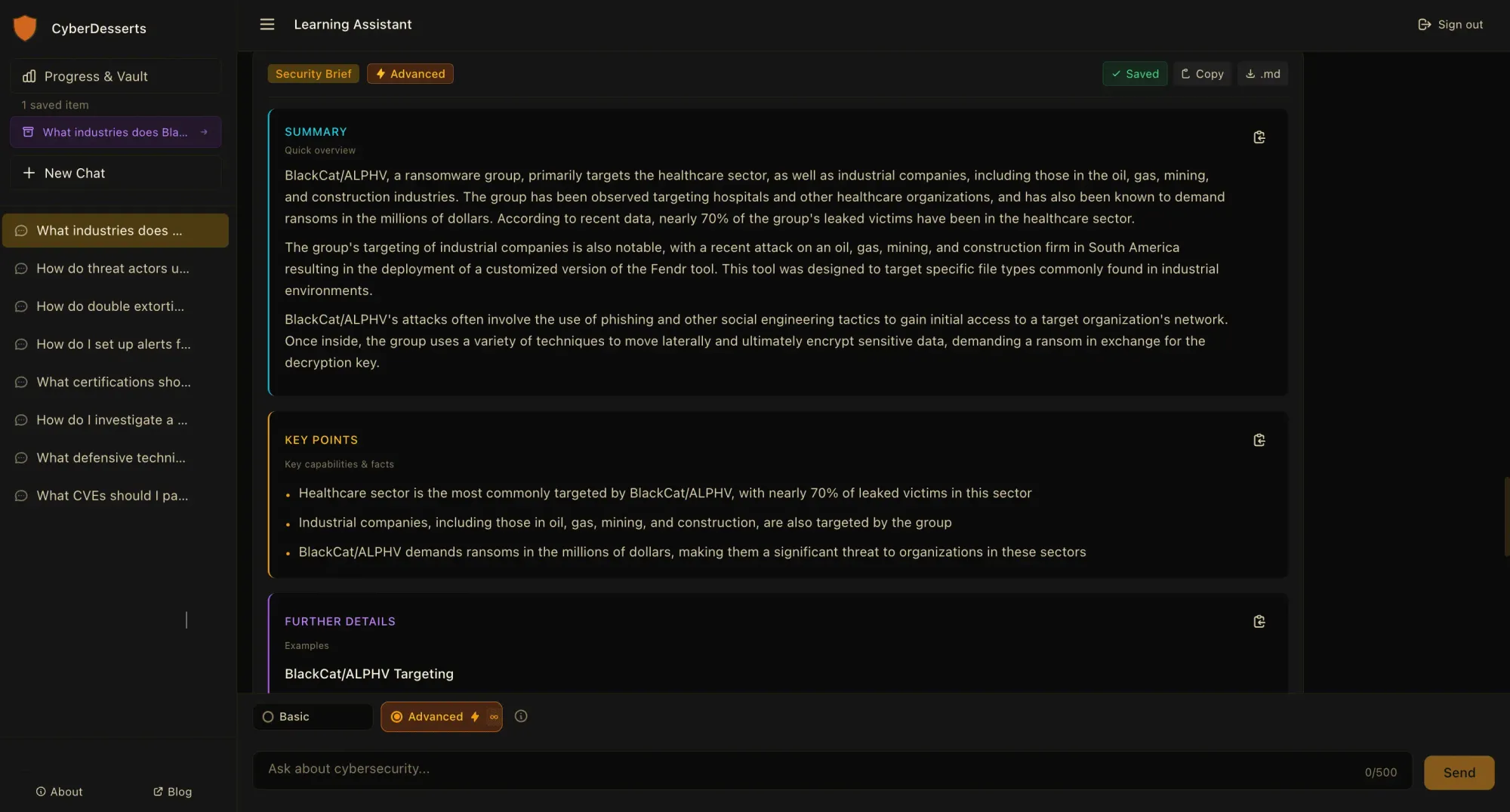

I built something to help. The CyberDesserts Learning Assistant is an AI trained on curated security knowledge: MITRE ATT&CK mappings, ransomware group intelligence, threat advisories, career guidance, and practical tutorials. Ask it anything about threats, defences, career paths, or security tools and get structured answers with sources you can verify.

But this post is not just about the tool. It is about what building it taught me, and why I think AI assistants will change how people learn cybersecurity.

Why Traditional Cybersecurity Learning Falls Short

Most cybersecurity education follows a familiar pattern: watch videos, read documentation, take notes, hope you remember it when you need it.

The problem is context. When you are troubleshooting a real incident or preparing for an interview, you do not need a 40-hour course. You need specific, actionable information right now. What MITRE ATT&CK techniques does LockBit use? What certifications matter for SOC analyst roles? How do I detect PowerShell attacks?

Traditional learning resources are not designed for this. They are designed for sequential consumption, not on-demand retrieval. I wanted something that could meet learners where they are, whether that is a complete beginner asking "what is ransomware" or a practitioner researching specific threat actor TTPs.

From Blog Search to Learning Assistant

The original idea was modest: "What if I could ask questions about my blog?" I had written dozens of articles on career development, threat intelligence, and hands-on tutorials. Making that knowledge searchable seemed useful.

That simple question turned into months of research and iteration. Building a RAG pipeline from scratch forced me to confront every AI challenge I had only understood theoretically. Prompt injection, data poisoning, hallucination mitigation. Writing about these threats is one thing. Defending against them is another.

What I Learned Building It

Data Quality Beats Model Size

I spent more time curating and parsing knowledge sources than I did on any visible feature.

The assistant draws from this blog, MITRE ATT&CK mappings, ransomware group profiles, industry advisories, and security reports. Structuring that data correctly, cleaning inputs, tagging metadata, preventing the model from confidently making things up. Every time I thought I had cracked it, I would ingest new data and watch the same hallucinations resurface.

The flashy parts of AI get attention. The boring data work determines whether the system actually helps people learn.

Teaching AI to Say "I Don't Know"

Getting the assistant to admit uncertainty was harder than getting it to sound authoritative.

LLMs want to give you an answer. Teaching a model to say "I don't have information on that" instead of fabricating something plausible took more prompt engineering than I expected.

This matters for security learning. If a learning assistant confidently tells you a CVE is patched when it is not, or explains a technique incorrectly, you learn the wrong thing. Worse, you might apply that wrong knowledge in your job.

Every response cites its sources so you can verify. Trust but verify applies to AI tutors too.

Prompt Engineering Is Part Science, Part Art

Small changes in how you instruct the model completely change response quality.

I have rewritten the core prompts dozens of times. Word choice matters. Instruction order matters. Explicit constraints matter more than implicit expectations. Each iteration taught me something new about how these models process instructions.

If you are learning about AI security, my approach build something. Even a small project teaches more than reading documentation.

How the Learning Assistant Works

The assistant generates structured Security Briefs in response to your questions. Each brief includes:

- Summary for quick context

- Key points with actionable information

- Tables mapping techniques, attack phases, or certification comparisons

- Source citations linking to the underlying knowledge

- Related topics for exploring connected concepts

- Follow-up questions to guide deeper learning

Ask about LockBit's ransomware-as-a-service model and you get a breakdown of affiliate structures, initial access techniques, and persistence methods mapped to MITRE ATT&CK. Ask about SOC analyst certifications and you get a comparison of relevant credentials with links to career guidance.

You can export any response as markdown to save for your notes or share with your team.

What You Can Learn

The knowledge base covers practical cybersecurity topics:

Threat Intelligence Ransomware groups, attack techniques, MITRE ATT&CK mappings, and current threat landscape analysis.

Career Development Certification comparisons, skill requirements by role, interview preparation, and career transition guidance. The assistant draws from many of the articles here including the Cybersecurity Skills Roadmap.

Technical Fundamentals Security tools, defensive techniques, incident response procedures, and hands-on implementation guidance.

AI Security Prompt injection, model vulnerabilities, AI governance, and emerging threats from AI-powered attacks. This connects to our broader AI security threats coverage.

The knowledge base is continuously expanding. Some topics have deeper coverage than others, and that will improve over time.

Honest Limitations

This is a first iteration. Like all AI systems, responses may occasionally contain inaccuracies. The model runs on limited resources, which means there are constraints on reasoning depth.

Do not rely on this for production security decisions without validation. Verify critical information from authoritative sources. I have built in safeguards, but no AI system is perfect.

If something seems off, it probably is. Trust your instincts and check the source. The citations are there for a reason.

Why This Matters for Learning Cybersecurity

The cybersecurity skills gap is real. Organisations cannot find enough qualified people, and aspiring professionals struggle to know where to start. Traditional education is slow, expensive, and often disconnected from what practitioners actually need to know.

AI assistants will not replace structured learning. You still need courses, labs, and hands-on practice. But they can accelerate the spaces between formal education: answering questions when you are stuck, providing context when you encounter unfamiliar concepts, and helping you explore topics at your own pace.

Building this reinforced something I already believed: you cannot secure what you do not understand. The same applies to learning. Understanding how AI works, including its limitations, makes you a better security professional.

Ask it something about threats, careers, tools, or techniques. See what works and what does not. Your feedback shapes what it becomes.

Report issues, suggest topics, or request features at info@cyberdesserts.com.

Published January 2026

Member discussion